Why the Pentagon Is Looking at Gamers, Ground Robots, and a New Kind of Trigger Puller

FORGED // Defense & National Security | November 14, 2027

By Talion Camisade, Defense Correspondent

There is a moment in any gunfight, the special operations community will tell you, where the math stops working in your favor. Your men are in the stack. The building is hardened. The intelligence is good but not perfect. And every second you spend pushing through that fatal funnel is a second the target has to kill your best people.

For most of the last two decades, the answer to that math problem was better training, better kit, and tier-one operators skilled enough to absorb the calculus of close-quarters violence and come out ahead. That answer still works. But it’s getting expensive — in lives, in training pipelines, and in the increasingly fraught political arithmetic of how America chooses to spend its most irreplaceable military assets.

A quieter answer is starting to emerge. And it is coming, of all places, from the direction of Ukraine — and from a generation of young Americans who grew up with a controller in their hands.

LESSONS FROM THE KHARKIV CLASSROOM

By mid-2025, the Ukrainian military had done something that would have seemed implausible to any conventional military theorist a decade earlier: it had effectively built one of the world’s most lethal drone forces out of civilian components, volunteer labor, and a talent pool that Western militaries had largely ignored.

What Ukrainian commanders discovered — and documented with enough operational rigor to get the Pentagon’s attention — was not simply that cheap drones were effective. It was who made them most effective.

The correlation was uncomfortable for military tradition but impossible to ignore: the operators consistently posting the highest kill-to-sortie ratios, the best target acquisition times, and the lowest fratricide rates were disproportionately drawn from competitive gaming backgrounds. Not former pilots. Not trained soldiers who’d been handed a controller. Gamers. Drone racers. People who had spent tens of thousands of hours developing the kind of spatial cognition, latency tolerance, and hand-eye coordination that traditional military training not only didn’t produce — it didn’t even know to ask for.

“We kept giving these kids aptitude tests designed for artillery officers,” one Ukrainian drone unit commander told a NATO assessment team in late 2025, according to a summary shared with allied partners. “Then we figured out the test was wrong.”

The U.S. Army took notes.

THE PENTAGON GETS UNCOMFORTABLE

In 2026, the Army’s Futures Command quietly stood up what insiders have taken to calling the “Synthetic Warfighter Study” — a multi-year research initiative examining how teleoperated ground systems, specifically bipedal and quadrupedal unmanned ground vehicles (UGVs), could be integrated into small direct-action units without crossing the legal and ethical tripwires that have, so far, prevented fully autonomous lethal systems from seeing operational deployment.

The distinction matters enormously, and not just to lawyers.

Current DoD policy, codified in Directive 3000.09 and not substantially revised despite years of pressure, requires that weapon systems allow commanders and operators to exercise appropriate levels of human judgment over the use of force. Fully autonomous weapons, robots that select and engage targets without a human decision in the loop, remain effectively barred from conventional deployment absent senior-level review and approval. That policy reflects not just legal reality but political reality: the American public, and most of America’s allies, are not prepared to watch a robot kill someone on the evening news without a human having pulled the trigger.

But teleoperation is a different animal entirely.

A robot that a skilled human operator is actively controlling — with real-time sensor feeds, responsive actuators, and sufficient situational awareness — is not an autonomous weapon. It is, in the eyes of U.S. policy, closer to a sophisticated weapon system. And weapon systems, even extremely capable ones, are legal.

“The question stopped being ‘can we build a robot that can clear a room,’” said one defense industry source familiar with the research, who spoke on condition of anonymity because the program remains sensitive. “The question became, ‘can we build an operator who can clear a room through a robot?’ That reframe opened a lot of doors.”

THE CONTROLLER QUESTION

What those doors revealed, according to multiple sources familiar with the research, was another uncomfortable overlap with the Ukrainian experience.

Military UGV programs have spent years developing proprietary control interfaces — ruggedized, purpose-built, and often deeply unintuitive for anyone who didn’t spend months in a specific training pipeline. The interfaces were built by engineers optimizing for redundancy and fault tolerance. They were not built by people who understood how human beings achieve peak spatial performance under stress.

The gaming industry, it turns out, had been solving that problem for thirty years.

The modern game controller — evolved through millions of hours of user feedback, competitive refinement, and neurological research into response latency and motor learning — is arguably the most optimized human-machine interface ever developed for rapid, precise, spatial decision-making under pressure. When Army researchers began testing experienced UGV operators against subjects with deep competitive gaming backgrounds, using modified commercial controller interfaces, the results were striking enough to accelerate the study’s timeline.

The follow-on phase, now reportedly underway, is examining a layered control architecture that combines traditional analog inputs with what researchers are describing as “command augmentation” — a system that integrates murmured voice commands for mode switching and target designation, eye-tracking for threat prioritization, and early-stage brain-computer interface (BCI) technology for reflex-speed actions that don’t have time to travel through the deliberate motor pathway.

Think of it as the difference between consciously deciding to duck and just ducking. The goal is to move certain operator responses to the same part of the nervous system that handles the latter.

“You’re not trying to make the operator think faster,” one researcher familiar with the project explained. “You’re trying to reduce the distance between what they perceive and what the system does about it. The latency in a conventional controller is cognitive, not mechanical.”

SMALL UNITS, BIG TEETH

The operational concept emerging from these research threads is not the autonomous robot army of science fiction. It is something more tactically specific — and, in some ways, more immediately disruptive.

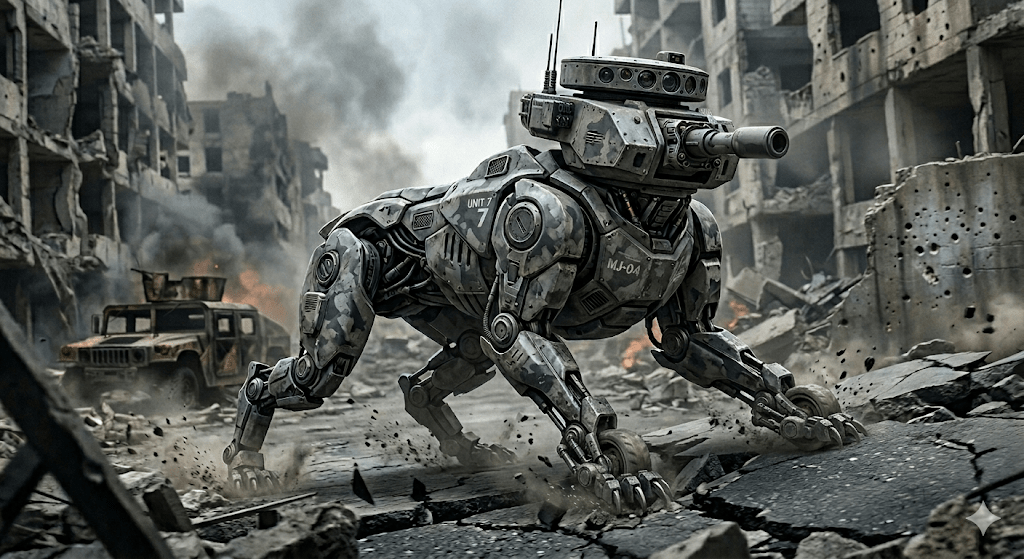

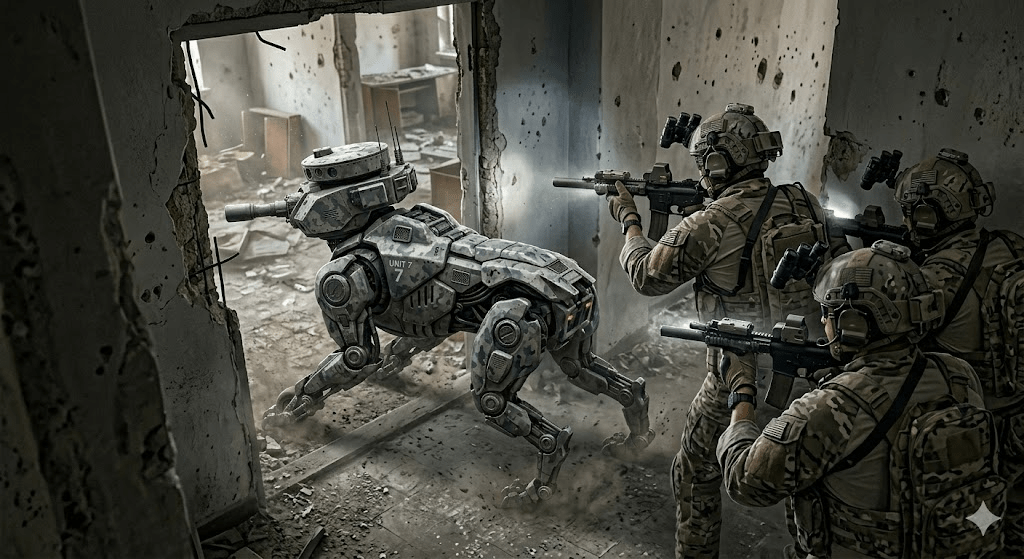

The model, as sources have described it, envisions small direct-action teams — think four to six operators — augmented by two to four teleoperated UGVs of varying form factors. Bipedal platforms offer the ability to navigate human-built environments: stairs, doorways, vehicles. Quadrupedal platforms offer speed, stability over rough terrain, and payload capacity for sensor packages or direct-fire systems.

Critically, the UGVs are not intended to replace the human operators. They are intended to go first.

In a hardened-target scenario where intelligence is incomplete — where you know the building is occupied and hostile but you don’t know exactly how — sending a teleoperated platform through the door first changes the calculus entirely. You lose a robot instead of a man. You gain sensor data. You degrade the defender’s position before your people cross the threshold. And you do it with a human being in the loop who, if they’re the right kind of operator, can make targeting decisions in the fraction of a second between breach and engagement.

Battery life, the perennial limitation of unmanned systems, becomes substantially less of a constraint in this environment. A close-quarters battle (CQB) clearance operation may last minutes. Urban terrain limits the distances involved. Unlike an intelligence, surveillance, and reconnaissance (ISR) drone loitering for hours over a target area, a bipedal UGV clearing a floor doesn’t need to outlast the sun.

The model also partially addresses a persistent vulnerability of tier-one forces: the asymmetry between what it costs to replace a machine and what it costs to replace a trained special operations soldier. A Ground Mobility Vehicle can be rebuilt. An eighteen-year operator pipeline cannot.

THE PRIVATE SECTOR IS ALREADY MOVING

The Pentagon is not the only institution looking at this problem.

The same technology convergence — capable UGVs, mature teleoperation platforms, a generation of operationally fluent gamers — has drawn interest from the private security and defense contracting sector, where the regulatory environment is different and the risk tolerance, at least for experimentation, is often higher.

Several defense startups, none of which agreed to speak on the record, are reportedly developing integrated teleoperation systems for commercial clients that fall outside the traditional private military contractor (PMC) market: infrastructure protection, high-value asset security in contested environments, and what one industry observer described as “the gap between what law enforcement can do and what military law allows.”

Whether any of these ventures will produce operationally meaningful capability — or whether they’ll become cautionary tales about the gap between prototype and deployment — remains to be seen.

What is not speculative is the underlying talent pool. Somewhere in the United States right now, there are nineteen-year-olds logging their ten-thousandth hour on a game controller, developing spatial cognition and reflex-response loops that no existing military training program is designed to cultivate or capture.

The Ukrainian experience suggests that the first institution to build a pipeline from that pool to an operational platform will have discovered something important about what the next decade of ground combat looks like.

The race, quiet as it currently is, appears to be on.

Talion Camisade covers defense technology and national security for FORGED. He has reported from Ukraine, Poland, and the Indo-Pacific. Contact: mwren@forgedmedia.com

FORGED does not receive funding from defense contractors or government sources. We are reader-supported. If you find this reporting valuable, consider a paid subscription.